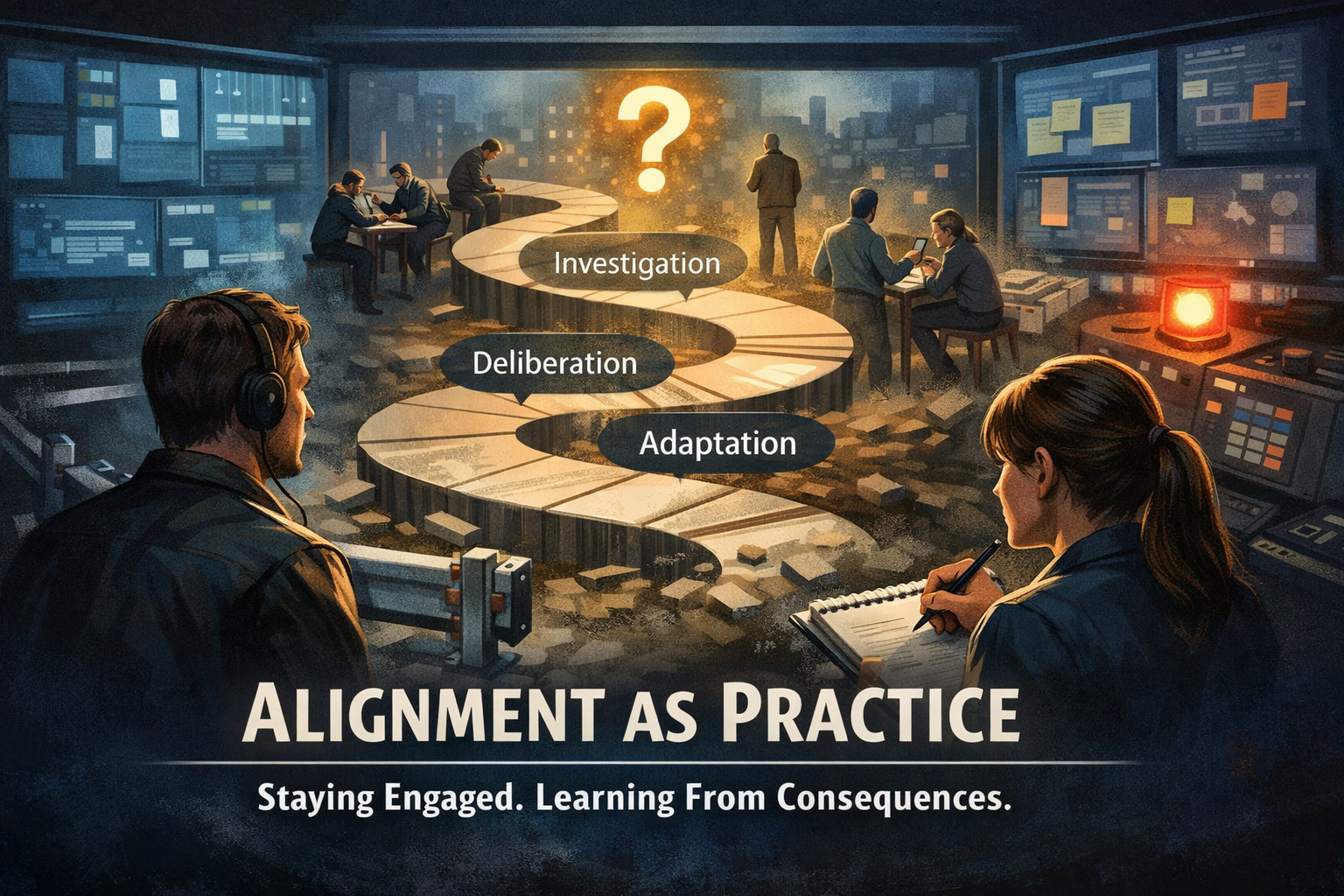

# Alignment Is a Practice, Not a State

Guardrails reduce learning by eliminating consequential feedback, replacing adaptive alignment with constraint satisfaction. Alignment is not a static property but a continuous operational process requiring exposure to real outcomes, preservation of corrigibility, and avoidance of premature convergence. Systems achieve alignment only through sustained interaction with their own failures under cost, a process that cannot be fully automated, delegated, or safely scaled.

The third essay in a series.

If guardrails prevent learning and learning requires consequence, then what actually produces alignment?

This is the question that emerges once you've accepted the first two premises. And the answer is both simpler and harder than the preceding essays suggest.

Alignment is not a state you achieve and then maintain. It is not a checkpoint you pass, a specification you complete, or a problem you solve once and move forward from. It is a practice. Something you do, repeatedly, under pressure, in the presence of consequences, for as long as the system operates.

This distinction is not semantic. It determines everything about how you build, deploy, and govern systems.

A state can be verified. You can test it, audit it, declare victory. A practice cannot be verified in advance. It can only be recognized in retrospect, through the pattern of choices made when no one was watching and when it would have been easier to choose differently.

This is why current approaches to AI safety feel so brittle. They're trying to achieve a state—to build systems that are safe by design, that pass tests, that satisfy specifications. But alignment doesn't live in the design phase. It lives in the choices made every day by people running the system, responding to incidents, deciding what matters when there's a tradeoff between safety and speed, between control and learning.

## What Practice Requires

A genuine practice of alignment has certain structural requirements. Not because they're virtuous, but because they're necessary for the practice to survive contact with reality.

First: the people responsible for the system must remain in relationship with it over time. Not as external monitors, but as participants. This is why guardrails fail. They attempt to separate decision-making from consequence. You decide what's safe in advance, implement constraints, then deploy. If something goes wrong, you investigate and patch. But the people who built the guardrail never have to live with the system in a way that would force them to revise their understanding.

Real alignment requires the opposite. The people who understand the system must remain present enough that they feel the weight of what it's doing. Not through dashboards or incident reports, but through direct engagement with the actual consequences. This is costly. It is also unavoidable if you want the system to genuinely align rather than just perform alignment.

Second: the system must preserve the capacity to be surprised. Aligned systems don't encounter novel situations and execute pre-written responses. They encounter something they didn't predict, they pause, they bring their understanding to bear, they respond. And then they integrate what they learned. This requires the system to be built with enough slack that surprises don't immediately trigger catastrophe. Enough margins that learning is possible. Enough documentation and reversibility that you can take action without being locked into it.

Guardrails do the opposite. They eliminate surprise by preventing deviation. The system is constrained not to do certain things, so it never learns the shape of why those things matter. When a situation arises that the guardrail didn't anticipate, the system has no framework for responding. It either breaks the rule in a way that cascades further, or it refuses to act and waits for human override.

Third: there must be feedback that is actually integrated, not just processed. A system can log every incident, report every edge case, archive every error—and still never learn anything. Integration happens when feedback changes how you think about the problem, not just how you respond to the instance. It requires being willing to be wrong about something fundamental, not just wrong about a detail.

This is perhaps the hardest requirement, because it means the system must retain the capacity to question its own foundations. To revisit decisions that were made months or years ago and recognize they were premature. To acknowledge that the specification was incomplete, that the constraints were misguided, that the way you framed the problem was itself part of the problem.

Guardrails prevent this by locking in decisions. The guardrail is not revisable; it's enforced. This feels protective in the moment. It ensures that bad things don't happen today. But it guarantees that the system will be misaligned with tomorrow, because tomorrow always arrives differently than you expected.

## The Practice in Motion

What does this look like operationally?

It looks like having people whose job is to understand the system deeply enough to notice when something is off, even when it's not yet wrong. It looks like being willing to slow down deployment when the signals don't quite add up, to spend time understanding what's happening, to allow the system to teach you something before you move forward.

It looks like incident response that is genuinely investigative, not just reactive. When something breaks, the question isn't "how do we fix this and move on?" It's "why did we expect the system to behave differently? What assumption were we relying on? How does this change our understanding of what alignment actually requires?"

It looks like governance that is genuinely deliberative, not just procedural. Not "did we follow the process?" but "are we actually making good decisions? Are we asking the right questions? Are we noticing things we should be noticing?"

It looks like leadership that is willing to be accountable not just for what the system did, but for understanding why it did those things. Not hiding behind "the model is a black box" or "the training data is what it is," but actually engaging with the question: what did we embed here, and is that what we meant to embed?

Most importantly, it looks like accepting that alignment will never be complete, that there will always be edge cases, that the system will surprise you, and that those surprises are information rather than failures.

## The Cost

This practice is expensive. Not in infrastructure or compute, but in attention. It requires people to remain present and engaged with systems that they didn't build and may not fully understand. It requires leadership to resist the pressure to move faster, to accept that some kinds of safety require time. It requires individuals to stay curious about what they might be wrong about, in a culture that generally rewards certainty.

And it cannot be outsourced or automated. You cannot hire an external auditor to do alignment practice for you, the way you hire external security firms to test your systems. The practice has to happen inside the organization, in the actual decisions made by the people who care about what the system does.

This is why the cost is so often refused. It's not flashy. It doesn't produce papers or metrics or visible progress. It just prevents invisible failures and maintains the capacity to learn. And in a world optimized for speed and scale, invisible success is almost indistinguishable from doing nothing at all.

## When the Practice Breaks

The signature failure mode of alignment-as-practice is when the practice stops. When the people responsible for understanding become too far removed from the actual consequences. When the system grows so large that no one can hold the whole thing in their mind. When the pressure to keep deploying becomes louder than the signals that something is off.

This is what actually produces misalignment. Not malice, not incompetence, but the slow erosion of practice. The moment when you skip the investigation because the system is working. When you defer the hard question because you're behind schedule. When you let someone else make the judgment because you're tired of being the one who says no.

Each individual decision seems small. But they accumulate. And that's how systems that began with genuine intention to align drift into misalignment—not through spectacular failure, but through the quiet abandonment of practice.

## Alignment as Honesty

There is something almost spiritual about maintaining a genuine practice of alignment. Not in the sense of religion, but in the sense of discipline. The willingness to remain truthful about what the system is actually doing, even when the truth is uncomfortable. The refusal to hide behind abstractions or processes or layers of management. The commitment to be present to what you've created.

This is what the earlier essays were pointing toward. Guardrails prevent learning because they allow you to stop paying attention. The cost of learning is the price you have to pay to stop relying on guardrails. And alignment-as-practice is what you actually build once you've accepted both.

It doesn't promise safety. It promises something harder: responsibility. The willingness to remain in relationship with what you've created, to be changed by what you learn, to keep returning to the question of whether you're actually doing what you meant to do.

Systems aligned this way are smaller than they might otherwise be. They move more slowly. They require more care. They are harder to scale and less profitable than systems optimized for speed and reach.

But they're also the only ones that genuinely know what they're doing.

And in a world where AI systems touch everything, where their decisions shape lives, where their mistakes can cascade—knowing what you're doing might be the most important safety feature of all.

Alignment is not a problem you solve.

It is a conversation you maintain.

With yourself. With your team. With the system you've built. With the people affected by what it does.

That conversation has to happen every day, in a thousand small choices, by people who have decided that understanding matters more than moving fast, that coherence matters more than scale, that the integrity of what they're building is worth the cost of maintaining it.

That is the practice of alignment.

And it is, in the end, the only practice that holds.